[10:57 Thu, 3 December 2020 by Thomas Richter]

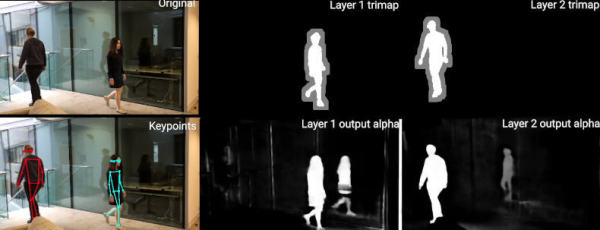

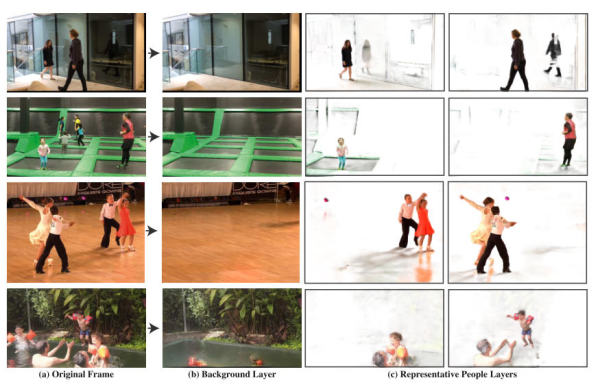

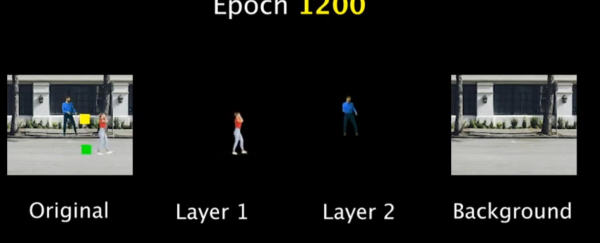

Researchers from Google and the University of Oxford have presented an interesting new algorithm for manipulating moving people in videos. The algorithm, which is based on neural networks, recognizes the different moving objects in a video and assigns each of them to its own layer. Manipulating these layers relative to one another makes a variety of interesting effects possible.

For example, individual objects (including image elements that move with them, such as their shadows and reflections!) can be removed without a trace, duplicated, frozen, or retimed and synchronized with each other. In the example video, several people jumping chaotically on different trampolines become a group jumping to the beat. The neural network performs a multitude of complex classical compositing tasks: it reconstructs, for example, a person walking past in the background who is temporarily hidden; it assigns to each person the shadows and reflections that belong to them and generates a mask from this; and it even separates two temporarily overlapping shadows into individual representations.

The method makes it very easy to create moving masks or layers of people, including their correlated traces in the image, and to manipulate them (for example by retiming) in a compositing program. The possibilities of intelligent, object-based video editing and compositing with such an algorithm are enormous: the movements of people in a video can, for example, be changed easily and completely after the fact to improve the image composition. And the whole thing also works with a moving camera.
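To make the layer idea more concrete, here is a minimal sketch of how per-object layers could be retimed, frozen or removed and then recombined. It is not the researchers' neural network and does not reproduce their code. It only illustrates the classical "over" compositing step that would be applied once such layers exist. All names (`over`, `composite`, the retiming functions) and the tiny two-pixel grayscale frames are assumptions made for illustration.

```python
from typing import Callable, List, Tuple

Pixel = Tuple[float, float]   # (gray value, alpha), both in [0, 1], not premultiplied
Frame = List[Pixel]           # flattened image, one entry per pixel
Layer = List[Frame]           # one frame per time step


def over(front: Pixel, back: Pixel) -> Pixel:
    """Classical 'over' operator: place `front` on top of `back`."""
    fv, fa = front
    bv, ba = back
    out_a = fa + ba * (1.0 - fa)
    if out_a == 0.0:
        return (0.0, 0.0)
    out_v = (fv * fa + bv * ba * (1.0 - fa)) / out_a
    return (out_v, out_a)


def composite(layers: List[Layer],
              retimers: List[Callable[[int], int]],
              t: int) -> Frame:
    """Composite layers (ordered back to front) at output time t.

    retimers[i] maps the output time to a source frame index of layer i;
    the index is clamped to the valid range of that layer.
    """
    result: Frame = [(0.0, 0.0)] * len(layers[0][0])
    for layer, retime in zip(layers, retimers):
        src = min(max(retime(t), 0), len(layer) - 1)
        result = [over(p, q) for p, q in zip(layer[src], result)]
    return result


# Hypothetical data: an opaque gray background and one "person" layer.
# In the article's setting the person layer would also carry the shadow
# and reflection that belong to that person.
background: Layer = [[(0.2, 1.0), (0.2, 1.0)] for _ in range(3)]
person: Layer = [
    [(1.0, 1.0), (0.0, 0.0)],   # t=0: person covers pixel 0
    [(0.0, 0.0), (1.0, 1.0)],   # t=1: person covers pixel 1
    [(0.0, 0.0), (0.0, 0.0)],   # t=2: person has left the frame
]

identity = lambda t: t      # play the layer as recorded
delay = lambda t: t - 1     # retime: play one frame later
freeze = lambda t: 0        # freeze the layer on its first frame

original = composite([background, person], [identity, identity], 1)
delayed = composite([background, person], [identity, delay], 1)
frozen = composite([background, person], [identity, freeze], 2)
removed = composite([background], [identity], 1)
```

Because each person lives in a separate layer with its own alpha mask, retiming only changes which source frame is read for that layer, and removing a person amounts to leaving the layer out of the list. The hard part, which this sketch takes as given, is producing layers in which the background behind each person is already filled in.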
Layer of people generated from videos

The downside for now is the very low video resolution of 448 × 256 pixels. To be usable in a professional environment, the algorithm would have to be optimized further or run on more powerful hardware. But a research project like this is first and foremost a feasibility study, and it is only a matter of time until an AI algorithm of this kind that is interesting for practical applications is developed to the point of general usability.

Training

The project video nicely explains how the neural network is trained to recognize moving objects, including their correlated effects, and to create a separate layer from each of them. This also nicely illustrates how completely different the deep learning approach is from traditional programming.

Here is the presentation of the method by Two Minute Papers:

The paper

Compositing using the generated layers