[15:15 Sat, 16 April 2022 by Thomas Richter]

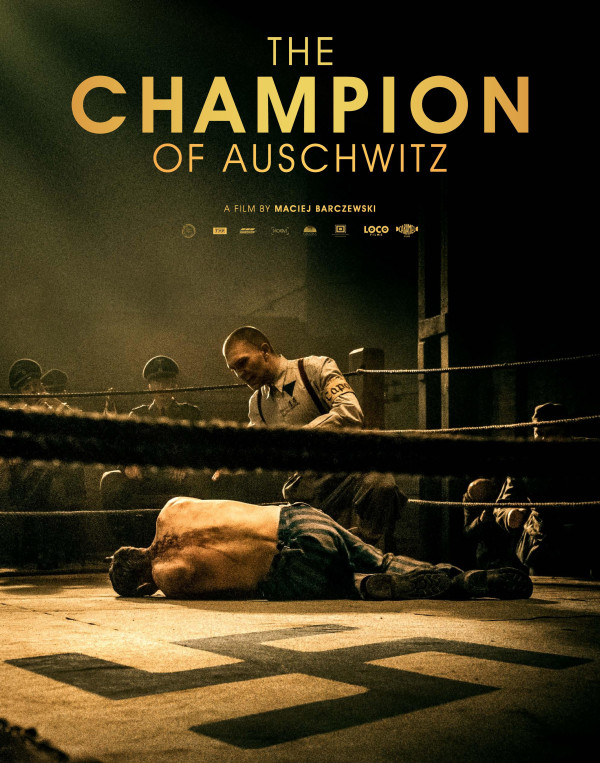

Foreign-language films are always at a disadvantage compared with films shot in the local language. They either have to be subtitled, which many viewers find distracts from the action, or they have to be elaborately dubbed: the original voices are replaced by voice actors who repeat the dialogue in the local language, lip-synced where possible. The advantage of dubbing is that there is no text to read while watching. The disadvantages are that the audience no longer hears the actors' original voices and that the new dialogue may not match the lip movements. Dubbing is also quite expensive, so it only pays off for larger films or markets where enough success is expected to recoup the cost.

New developments in deep learning promise a solution: the facial expressions and lip movements of the actors can also be adapted to match the voice of the dubbing actors.

[Caption: The Champion trailer]

The Polish film "The Champion of Auschwitz" (2021) by director Maciej Barczewski is now the first feature film to be dubbed using a similar method. Unlike the approach of Flawless AI, however, it does not rely entirely on a deep learning system that first learns the typical mouth movements of the actors from film footage and then synthesizes them. Instead, the actors themselves re-speak the dubbing text and are filmed doing so. Using a digital model of the face derived from the data of this performance, the algorithm then alters the images in the finished film so that the actor's facial and lip movements match the new dubbed text.
[Caption: Digital face model to adjust facial features]

The technique, christened the "Plato Process," was developed by [...]. The "Plato Process" consists of a series of interwoven algorithms and is based on machine learning and [...].

[Caption: Capturing in the studio for dubbing]

We see this technology as a first step. The further stages of development are already mapped out, since the necessary methods already exist and only need to improve in quality to the point where their results hold up on a large screen. In the "Plato Process", for example, the actors have to record the dubbed text themselves. This rules out dubbing into several languages that are completely foreign to them, because the result would sound too oddly accented. The recording session required for the new audio and for capturing the facial expressions is also still quite costly.

One alternative, for example, is offered by [...].

What is clear is that these technologies are developing quickly. They will make dubbing simpler, cheaper and faster, and with it the ability to offer films in other countries and thus in an even larger market, which is a great opportunity for filmmakers. This is all the more true because many viewers dislike subtitled films, and dubbing, especially when done in the original voice, lets the audience experience the film better. As such technologies come into wider use, however, a problem will also arise: voice actors and actors who also make a living from dubbing films could lose more and more jobs to such algorithms. In the future, the whole process could also be fully automated by AI for many videos (for example by YouTube), from automatic speech recognition through translation to synthesizing the voices in a new language, including adjusting the lip movements to make the whole thing look more natural.
(Thanks to Frank Glencairn.)

German version of this page: KI hilft beim Nachsynchronisieren von Kinofilm "The Champion"