[10:56 Fri, 28 October 2022 by Thomas Richter]

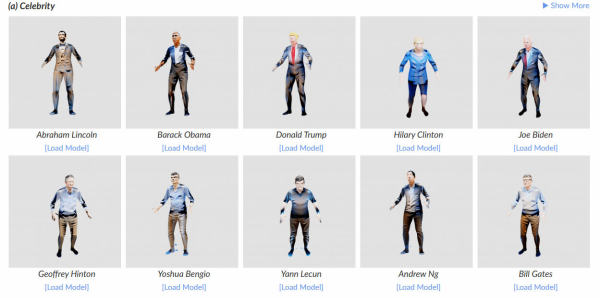

New AI algorithms are currently revolutionizing the creation of images, videos and even 3D models, making these tasks more accessible than ever before. The newly introduced AvatarCLIP now also makes it possible to create and animate 3D avatars using only text input. Unlike professional software that requires expert knowledge, AvatarCLIP lets users generate a 3D avatar of any desired shape and texture and then control its movements, all through text commands. The process takes three steps. First, a rough body shape is generated from a text description (in the clip, for example, "a very thin man"). Next, the appearance, meaning the details of the body as well as the clothing, is defined ("a ninja"). Finally, a movement is specified ("throw basketball"). Bodies can be generated based on famous personalities ("Barack Obama"), fictional characters ("Iron Man"), or a general description (such as "gardener" or "wizard"). Here is the AvatarCLIP

In the future: automatically generated animated movies?

As always, this is early-stage work that mainly demonstrates that the proposed method works in principle; texture errors and other inconsistencies should be improved in future versions. Still, it shows how easily three-dimensional avatars of arbitrary people could be generated and animated in the future. The ultimate goal would be the automatic generation of entire animated movies from text descriptions alone, including interactions between the characters and with an environment and objects that are likewise generated automatically.

Avatars of famous personalities generated by AvatarCLIP

Human Motion Diffusion Model: text-to-motion

Another new AI that is also interesting for users working with 3D models and animations is Tel Aviv University's

German version of this page: AvatarCLIP: New AI generates and animates 3D avatars from text descriptions