[15:22 Sun, 4 April 2021 by Thomas Richter]

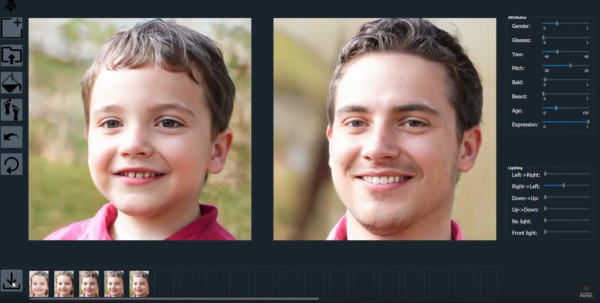

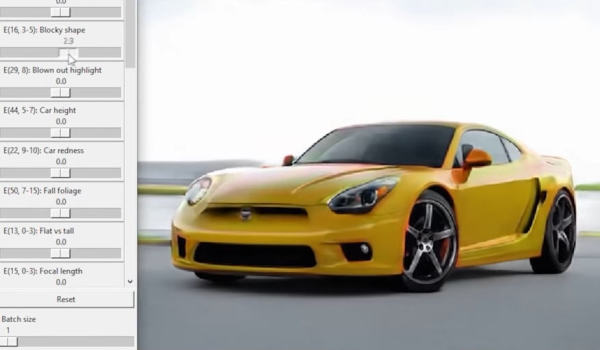

In this video, Károly Zsolnai-Fehér from Two Minute Papers introduces some interesting new possibilities for semantic photo editing via AI that recent developments have made possible. Neural networks trained on many thousands of photos could already learn not only to recognize an object (be it a face, a car or a cat) but also to generate it freely. Until now, however, the options for influencing specific parameters during generation were very limited. It was possible to morph from one image of an object to another, but the algorithm could not distinguish, for example, between foreground and background or between individual aspects of the object.

This is now possible with a new method called GANSpace. For example, a face can be manipulated via simple parameters such as age, gender, facial expression, lighting or viewing angle, and glasses or a beard can be added (or an existing one removed), dynamically and interactively, simply by moving a slider.

*Faces manipulated by AI editing*

Analogously, photos of cars can be generated that look more or less sporty or beefy. In addition, different aspects of the background can be selected: should the car stand on grass or in autumn leaves?

*Regenerate cars freely*

Likewise, the character of portrait paintings can be changed in detail, such as the type of brush strokes, painting style, face color or orientation of the face.

We can hardly wait until such object-based semantic editing, opened up by deep learning, is also available for video. We had already speculated about this in an earlier article.
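
The article does not go into how the sliders work internally. As a rough illustration only: the GANSpace approach, as we understand it, applies principal component analysis (PCA) to latent vectors sampled from a trained generator and treats the resulting directions as editable parameters. The sketch below uses random toy data in place of a real generator's latents, and the names `principal_directions`, `edit_latent` and `slider` are hypothetical, chosen for this example rather than taken from any published code.

```python
import numpy as np


def principal_directions(latents, k):
    """Return the mean and the top-k PCA directions (as rows) of sampled latents."""
    mean = latents.mean(axis=0)
    # SVD of the centered samples: the rows of vt are orthonormal principal
    # directions, sorted by decreasing variance.
    _, _, vt = np.linalg.svd(latents - mean, full_matrices=False)
    return mean, vt[:k]


def edit_latent(w, direction, slider):
    """Move a latent vector along one direction; `slider` is the signed step size."""
    return w + slider * direction


# Toy stand-in for latent vectors sampled from a trained generator (assumption:
# a real setup would sample these from the network itself).
rng = np.random.default_rng(0)
latents = rng.normal(size=(1000, 16)) * np.linspace(3.0, 0.5, 16)

mean, directions = principal_directions(latents, k=4)
w = latents[0]
w_edited = edit_latent(w, directions[0], slider=2.0)
# A real pipeline would now pass w_edited to the generator to render the edited image.
```

Each slider in such a setup would correspond to one direction. Which visual attribute (age, lighting, background and so on) a given direction controls is not known in advance and would have to be found by inspecting the generated images.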