[13:21 Wed, 1 December 2021 by Thomas Richter]

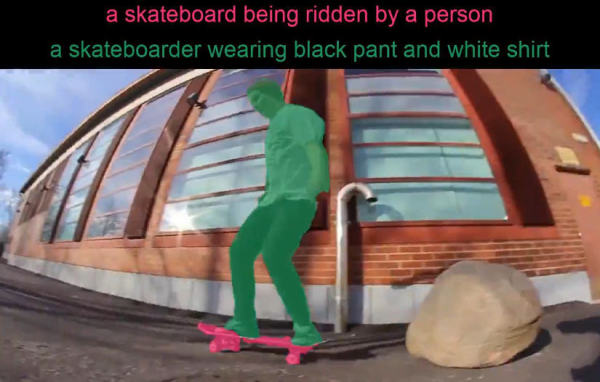

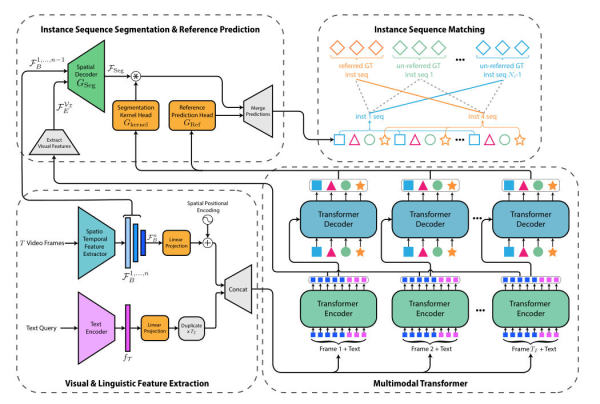

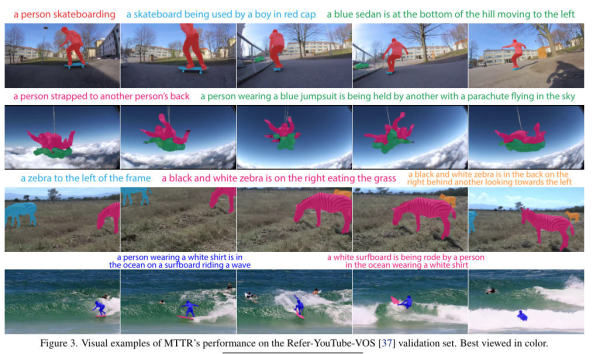

This new deep learning algorithm from a team in Israel does something very interesting for video editing: given a short sentence describing an object in a video, it recognizes that object and isolates it with a mask.

The description of the desired object can be quite complex. It can also identify the object by its dynamic relations to other objects or by its position in space, for example "a man in a white T-shirt and blue pants riding a surfboard", "a big monkey playing with a baby monkey", "the zebra in the back on the right standing behind another one looking to the left" or "a person on a motorcycle".

To do this, the AI algorithm performs a whole series of complex tasks from the areas of text and video understanding. First it must "understand" the input text. Then it must correctly recognize all the objects in the video, including their dynamic relationships, and identify the correct object based on the user's description, taking into account both its properties, such as its color, and its relationships to other objects, such as "the tennis racket in the hand of the player with the red shirt". Finally, the object must be separated from the background and tracked across all frames in which it appears, producing a dynamic mask that follows it even as its appearance changes through movement and changes of perspective. The mask no longer has to be adjusted by hand afterwards. Even actions that unfold over the course of a longer video are detected correctly, such as "the hand giving the dog a ball."

The new algorithm demonstrates quite vividly the complex tasks that can now be accomplished by combining different deep learning methods. It could be used, for example, to find specific objects in a video archive, together with their relationships to other objects, and to extract them.
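To make the idea of a dynamic mask more concrete, here is a minimal Python sketch of what an editor could do with the result. It does not reproduce the algorithm itself: it simply assumes that some model has already produced one boolean mask per frame, and the function names `apply_dynamic_mask` and `frames_with_object`, as well as the toy data, are hypothetical and chosen only for illustration.

```python
import numpy as np


def apply_dynamic_mask(frames: np.ndarray, masks: np.ndarray) -> np.ndarray:
    """Black out everything except the masked object in every frame.

    frames: uint8 array of shape (T, H, W, 3), one RGB image per frame.
    masks:  bool array of shape (T, H, W), True where the object is.
    Both arrays are assumed inputs; the masks would come from the model.
    """
    if frames.shape[:3] != masks.shape:
        raise ValueError("frames and masks must agree in (T, H, W)")
    # Add a channel axis so each per-pixel mask value applies to R, G and B.
    return frames * masks[..., None].astype(frames.dtype)


def frames_with_object(masks: np.ndarray) -> list[int]:
    """Return the indices of the frames in which the object is visible."""
    return np.flatnonzero(masks.any(axis=(1, 2))).tolist()


# Toy data: three uniformly gray 4x4 frames and a 2x2 "object" that
# moves one pixel to the right and then leaves the picture.
frames = np.full((3, 4, 4, 3), 200, dtype=np.uint8)
masks = np.zeros((3, 4, 4), dtype=bool)
masks[0, 1:3, 0:2] = True
masks[1, 1:3, 1:3] = True

cutout = apply_dynamic_mask(frames, masks)
visible = frames_with_object(masks)
```

Because the mask is stored per frame, the same data answers both use cases mentioned above: `cutout` contains the isolated object for compositing, while `visible` lists the frames in which the described object appears, which is what a search through a video archive would need.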