[10:19 Mon, 24 June 2019 by Thomas Richter]

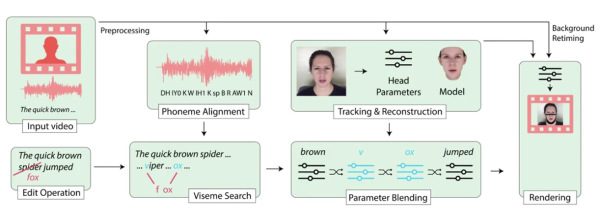

A new deepfake algorithm will soon give filmmakers and editors completely new freedom when editing clips of people speaking. Where previously only a new take could save a scene in which an actor misspoke or swallowed a word, the new post-production method makes it easy to make far-reaching changes to the spoken text.

A prerequisite for convincing results is at least 40 minutes of training footage of the speaker, plus a transcript of what is said (which, however, can also be generated automatically by increasingly capable tools). From this training material, the algorithm learns which facial expressions the speaker makes for each speech sound (phoneme) and how he pronounces it. This forms the basis for synthesizing new words. In tests with 138 participants, the manipulated clips were rated as "real" in almost 60 percent of cases. The visual quality of the new passages comes very close to the original, although there is still plenty of room for improvement.

Besides the obvious benefits for professional video work, such as changing dialogue after the fact without new recordings, such an easy way to manipulate footage can of course also be misused to fake videos. Although algorithms are also being developed to detect such fakes, be they images, videos or audio, …

German version of this page: "Per Texteditor die Worte eines Sprechers in einem Video verändern" (Changing a speaker's words in a video with a text editor) // Siggraph 2019
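To make the idea of building new words from existing training footage a little more concrete, here is a deliberately simplified sketch. It is not the published method. It assumes the training video has already been aligned to its transcript at the phoneme level. The ARPAbet-style labels and timings are made up, and the helper names `build_index` and `find_snippets` are hypothetical. A new word, given as a phoneme sequence, is greedily covered by the longest matching runs found in the recording. The system described in the article is considerably more involved. This toy lookup only illustrates the general idea of reusing phoneme-level pieces of the training material.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical phoneme-aligned training data: (phoneme, start_s, end_s).
Segment = Tuple[str, float, float]


def build_index(segments: List[Segment]) -> Dict[str, List[int]]:
    """Map each phoneme to every position where it occurs in the corpus."""
    index: Dict[str, List[int]] = defaultdict(list)
    for i, (phoneme, _, _) in enumerate(segments):
        index[phoneme].append(i)
    return dict(index)  # plain dict, so unseen phonemes raise KeyError


def find_snippets(target: List[str], segments: List[Segment],
                  index: Dict[str, List[int]]) -> List[Tuple[float, float]]:
    """Greedily cover the target phonemes with the longest runs in the corpus.

    Returns (start_s, end_s) time ranges of training footage to reuse.
    Raises KeyError if a target phoneme never occurs in the training material.
    """
    snippets: List[Tuple[float, float]] = []
    pos = 0
    while pos < len(target):
        best_start, best_len = -1, 0
        # Try every corpus occurrence of the current phoneme and keep the
        # one that matches the most following target phonemes in a row.
        for i in index[target[pos]]:
            length = 0
            while (pos + length < len(target)
                   and i + length < len(segments)
                   and segments[i + length][0] == target[pos + length]):
                length += 1
            if length > best_len:
                best_start, best_len = i, length
        # Time range spanned by the chosen run of corpus segments.
        start_s = segments[best_start][1]
        end_s = segments[best_start + best_len - 1][2]
        snippets.append((start_s, end_s))
        pos += best_len
    return snippets


if __name__ == "__main__":
    # Made-up alignment of the words "hello world".
    corpus: List[Segment] = [
        ("HH", 0.00, 0.08), ("EH", 0.08, 0.20), ("L", 0.20, 0.28),
        ("OW", 0.28, 0.45), ("W", 0.60, 0.70), ("ER", 0.70, 0.85),
        ("L", 0.85, 0.92), ("D", 0.92, 1.00),
    ]
    # Synthesize the unseen word "held" (HH EH L D) from existing pieces.
    print(find_snippets(["HH", "EH", "L", "D"], corpus, build_index(corpus)))
    # prints [(0.0, 0.28), (0.92, 1.0)]
```

Greedy longest-match keeps the number of cuts small, which is one plausible way to reduce visible seams. A real system would also have to smooth the transitions between snippets and handle sounds that never appear in the training material. That is one reason why a substantial amount of footage, such as the 40 minutes mentioned above, is needed.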