[09:32 Sun, 14 May 2023 by blip]

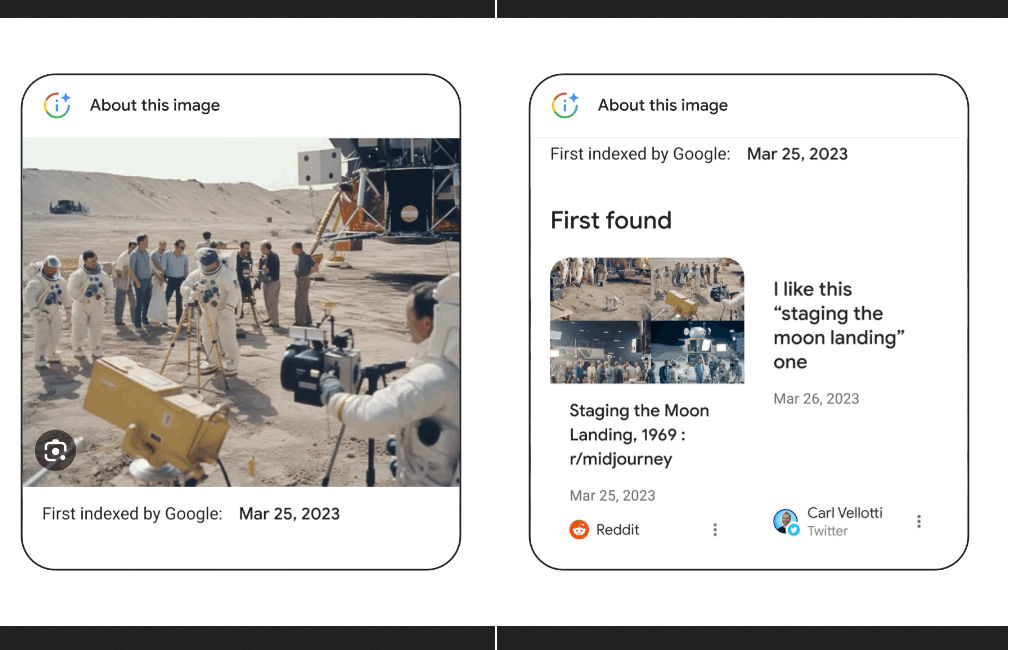

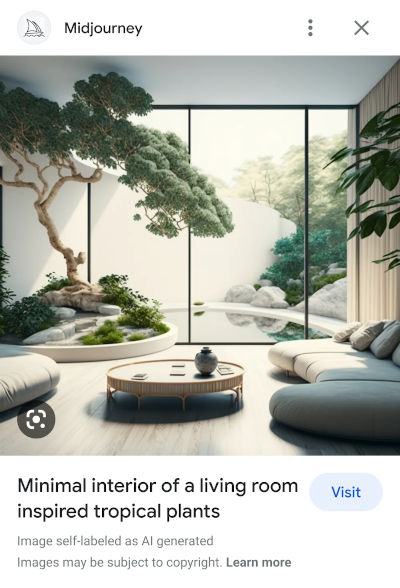

At Google's developer conference, a lot revolved around AI: besides the new language model PaLM 2, Google presented a range of AI-supported tools that are supposed to assist with all sorts of tasks in the future, such as writing emails and texts, programming, editing photos, creating music, playing games, searching and translating.

About this image: additional information about images

To make fake images easier to identify, Google will introduce a new feature in its image search. "About this image" provides additional information intended to help users judge how authentic an image is. It should show when and on which page Google first indexed an image, and where else it has appeared. If, for example, recognized news sites have exposed a photo as fake, that information will ideally show up here, at least for anyone who takes the trouble to look.

Identification for AI-generated images

Google also commits to tagging every image created with its new generative tools with an identifier in the metadata, so that even if the image file is used elsewhere, it should still be possible to tell that it was generated by AI. Website operators who publish AI-generated images will apparently have to add the metadata tag manually. According to Google, other services such as Midjourney and Shutterstock will also adopt this tag in the future. An example image shows how the tag is to be displayed in Google Image Search: as a text note below the image.

Example of labeling in Google Image Search

In this form, unfortunately, a label for AI-generated images sounds rather toothless: even if major services add it automatically, it is not difficult to strip it from the metadata again and release the image onto the Internet without any hint. It is also possible to install the open-source generator Stable Diffusion and avoid the tagging that way.
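A metadata label is just a few extra bytes stored alongside the pixel data, which is why removing it is so easy. The following Python sketch illustrates this with a PNG file, using only the standard library. It is a toy under stated assumptions: the keyword `AIGenerated` and the value `trainedAlgorithmicMedia` are hypothetical stand-ins stored in a PNG `tEXt` chunk, not the actual tag format Google uses. The sketch builds a 1x1 image carrying the label, reads the label back, then drops all textual metadata chunks while leaving the image data (`IDAT`) untouched.

```python
import struct
import zlib
from typing import Dict, Iterator, Optional, Tuple

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
TEXT_CHUNKS = (b"tEXt", b"iTXt", b"zTXt")  # PNG's textual metadata chunk types


def make_chunk(chunk_type: bytes, data: bytes) -> bytes:
    """Build one PNG chunk: length, type, data, then CRC-32 over type + data."""
    crc = zlib.crc32(chunk_type + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + chunk_type + data + struct.pack(">I", crc)


def make_tiny_png(label: Optional[str]) -> bytes:
    """Create a 1x1 8-bit grayscale PNG, optionally carrying a label in a tEXt chunk."""
    # width=1, height=1, bit depth=8, color type=0 (grayscale), compression/filter/interlace=0
    ihdr = struct.pack(">IIBBBBB", 1, 1, 8, 0, 0, 0, 0)
    scanline = b"\x00\x80"  # filter byte 0, then one mid-gray pixel
    chunks = [make_chunk(b"IHDR", ihdr)]
    if label is not None:
        # Hypothetical keyword "AIGenerated"; tEXt layout is keyword, NUL separator, Latin-1 text
        chunks.append(make_chunk(b"tEXt", b"AIGenerated\x00" + label.encode("latin-1")))
    chunks.append(make_chunk(b"IDAT", zlib.compress(scanline)))
    chunks.append(make_chunk(b"IEND", b""))
    return PNG_SIGNATURE + b"".join(chunks)


def iter_chunks(png: bytes) -> Iterator[Tuple[bytes, bytes, bytes]]:
    """Yield (chunk_type, data, raw_chunk_bytes) for every chunk after the signature."""
    if not png.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    pos = len(PNG_SIGNATURE)
    while pos < len(png):
        (length,) = struct.unpack(">I", png[pos:pos + 4])
        chunk_type = png[pos + 4:pos + 8]
        end = pos + 12 + length  # 4 bytes length + 4 bytes type + data + 4 bytes CRC
        yield chunk_type, png[pos + 8:pos + 8 + length], png[pos:end]
        pos = end


def read_text_labels(png: bytes) -> Dict[str, str]:
    """Collect keyword/value pairs from uncompressed tEXt chunks."""
    labels = {}
    for chunk_type, data, _raw in iter_chunks(png):
        if chunk_type == b"tEXt":
            key, _sep, value = data.partition(b"\x00")
            labels[key.decode("latin-1")] = value.decode("latin-1")
    return labels


def strip_text_chunks(png: bytes) -> bytes:
    """Return the same image with every textual metadata chunk dropped."""
    kept = [raw for chunk_type, _data, raw in iter_chunks(png) if chunk_type not in TEXT_CHUNKS]
    return PNG_SIGNATURE + b"".join(kept)


if __name__ == "__main__":
    tagged = make_tiny_png("trainedAlgorithmicMedia")  # hypothetical label value
    print(read_text_labels(tagged))  # {'AIGenerated': 'trainedAlgorithmicMedia'}
    clean = strip_text_chunks(tagged)
    print(read_text_labels(clean))  # {}
    print(clean == make_tiny_png(None))  # True
```

The stripped file is byte-for-byte identical to an image that was never labeled, so nothing in the file reveals that a label was ever there. A mark embedded in the pixel values themselves would not vanish through this kind of chunk filtering.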
It would have been better, but also more difficult, to embed the label in the image itself as a watermark.

Content Authenticity Initiative: a digital guarantee of origin

Incidentally, there is also an attempt to tackle image authenticity from the other direction, namely via the Content Authenticity Initiative, which aims to provide a digital guarantee of origin for authentic images. We can only hope that one of these approaches, or other ideas, will soon provide reliable ways to distinguish artificially generated or manipulated images from authentic shots.