[13:22 Wed, 26 April 2023 by Thomas Richter]

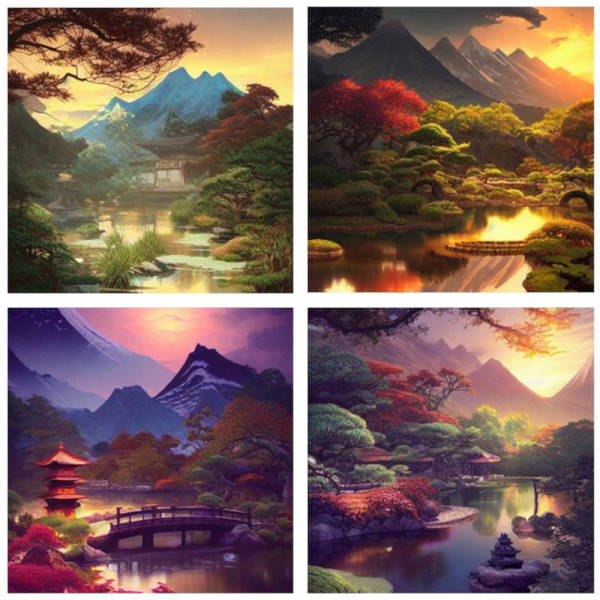

Researchers at Google have optimized Stable Diffusion 1.4 to the point where generating a 512x512 image takes less than 12 seconds on a modern smartphone such as the Samsung S23 Ultra. Qualcomm had already presented a method in February that took only 15 seconds, but it was limited to Android smartphones equipped with the current Snapdragon 8. By comparison, Google's new method can be applied to other diffusion models and to different hardware configurations, which means that AI images could soon be generated on a wide range of mobile devices in acceptably short times.

Images generated on a smartphone via Stable Diffusion.

Not long ago, running diffusion models as large as Stable Diffusion on a smartphone was hardly conceivable: with more than 1 billion parameters, they are too demanding for most devices, whose computational and memory resources are limited. As a rule, the complexity and capability of an AI model, and with them its computational requirements, depend on the number of parameters it has to learn from the training data: the more there are, the more complex the model becomes.

However, Google achieved a large speedup by optimizing such diffusion models for mobile GPUs in a whole series of ways. For example, the new GPU-optimized shader can perform in a single step several intermediate steps that are normally required to generate images via Stable Diffusion. These optimizations reduced the time required to generate an image on a Samsung S23 Ultra and an iPhone 14 Pro by 52% and 33%, respectively, while also significantly reducing memory usage. The fastest smartphone tested to date, a Samsung S23 Ultra, required less than 12 seconds for a 512x512 image with 20 iterations using Stable Diffusion 1.4 without int8 quantization.
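To give a feel for why the parameter count matters on a phone, the following back-of-the-envelope sketch estimates how much memory the weights alone would occupy at different numeric precisions. The figure of exactly 1 billion parameters is an assumption taken from the article's "over 1 billion" lower bound, and the function name `weight_memory_gib` is purely illustrative. The estimate ignores activations and intermediate tensors, and it does not claim to reflect the precision Google actually used.

```python
# Rough estimate of the memory needed just to hold a model's weights.
# Assumption: 1e9 parameters (the article only says "over 1 billion").
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}


def weight_memory_gib(num_params: int, dtype: str) -> float:
    """Return the size of the weights in GiB for the given precision."""
    return num_params * BYTES_PER_PARAM[dtype] / 2**30


if __name__ == "__main__":
    num_params = 1_000_000_000  # hypothetical round figure
    for dtype in BYTES_PER_PARAM:
        print(f"{dtype}: {weight_memory_gib(num_params, dtype):.2f} GiB")
    # float32: 3.73 GiB
    # float16: 1.86 GiB
    # int8: 0.93 GiB
```

Even under these simplified assumptions, the weights at full 32-bit precision would take up a substantial share of a typical smartphone's memory, which is why reduced precision and lower memory usage matter so much for on-device generation.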
This again shows the enormous speed of current AI development.