The field of generative AI continues to develop at a rapid pace and Meta has recently emerged as one of the driving forces. One field of particular interest to slashCAM is of course video generation, for which Meta has now introduced a new model called Emu.

Even though generative images can be impressively flawless in themselves, credible animations that appear consistent throughout have not yet been achieved. At the same time, there is also the fundamental problem that changing a text input (a so-called prompt) often changes the entire image content and not just a selective part. Emu now wants to offer new, interesting solutions to both problems at the same time.

Emu Video is based on diffusion models and has a standardized architecture for video generation tasks that can react to a variety of inputs: Text only, image only and both text and image. The process consists of two stages; first the generation of images conditioned on a text prompt, and then the generation of videos conditioned on both the text and the generated image.

Emu Video is based on diffusion models and has a standardized architecture for video generation tasks that can react to a variety of inputs: Text only, image only and both text and image. The process consists of two stages; first the generation of images conditioned on a text prompt, and then the generation of videos conditioned on both the text and the generated image.

In most cases, the generated video is not quite what you had in mind. However, since not everyone has the time or inclination to deal intensively with prompt engineering, it would be much easier if you could just enter change requests afterwards without changing the basic properties of the video. And this is exactly where Emu Edit comes into play.

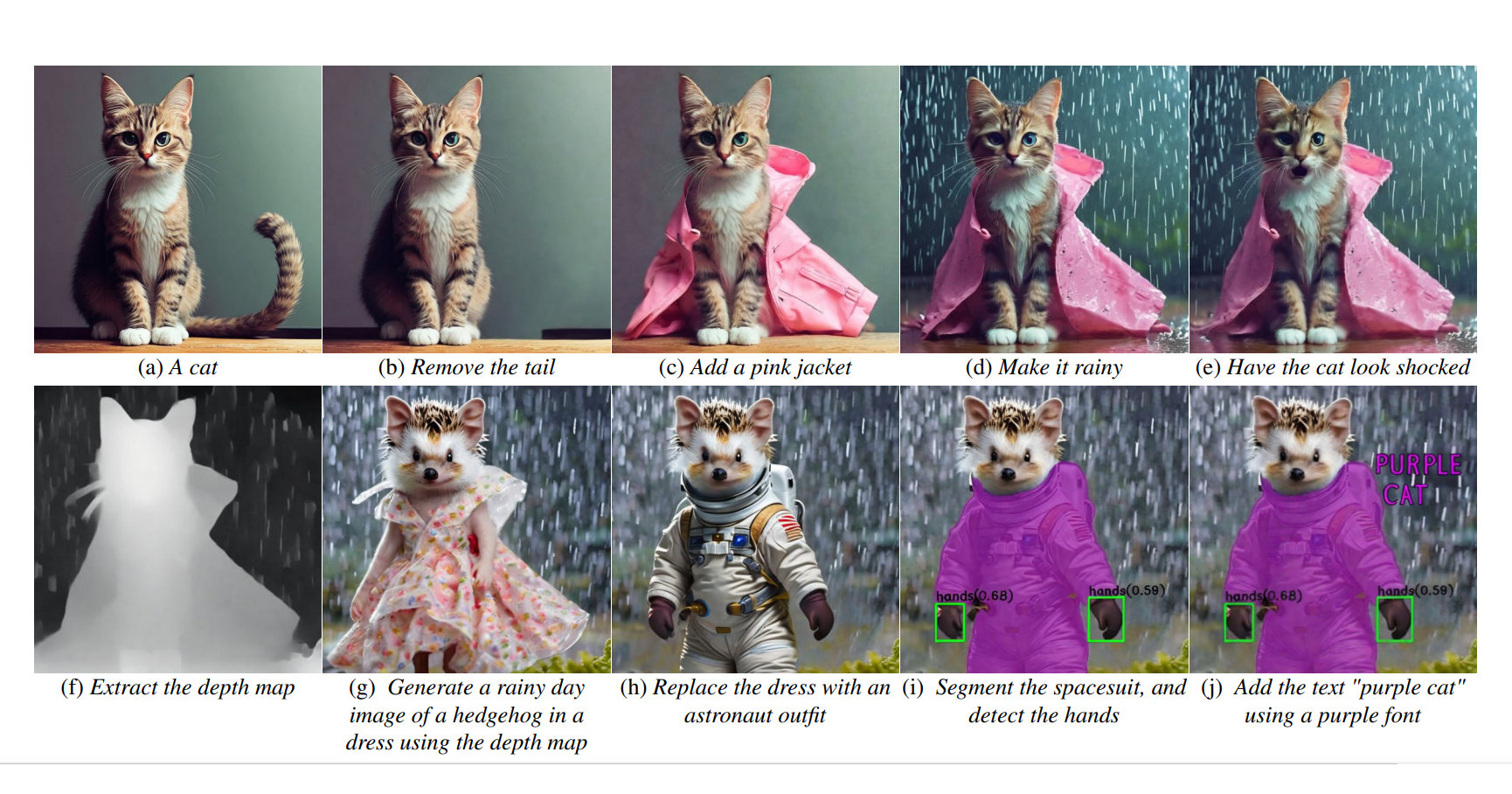

Emu Edit should be able to carry out edits using subsequent instructions. This should enable tasks such as local and global editing, removing and adding a background, color and geometry transformations, recognition and segmentation and much more.

Emu Edit should be able to carry out edits using subsequent instructions. This should enable tasks such as local and global editing, removing and adding a background, color and geometry transformations, recognition and segmentation and much more.

Unlike other models, Emu Edit attempts to only change affected pixels that are relevant to the editing request. In contrast to many generative AI models, Emu Edit follows the instructions as precisely as possible and tries to ensure that pixels in the input image that have nothing to do with the instructions remain untouched.

This has been achieved using a special training data set containing 10 million synthesized samples, each of which contains an input image, a description of the task to be performed and the target output image. Once again, it appears that good data is far more valuable than pure computing power.

Looking at the results, it is fair to say that this is another milestone in AI development. All the videos shown are amazingly consistent temporally and the samples for Emu Edit really leave the basic style of the videos untouched.

As we've mentioned many times before, generative AI for moving images is evolving with seven-mile boots. And the transformation of these models to photorealistic footage is certainly less than 12 months away. With this in mind, get ready for 2024...