[10:50 Thu, 18 April 2024 by Rudi Schmidts]

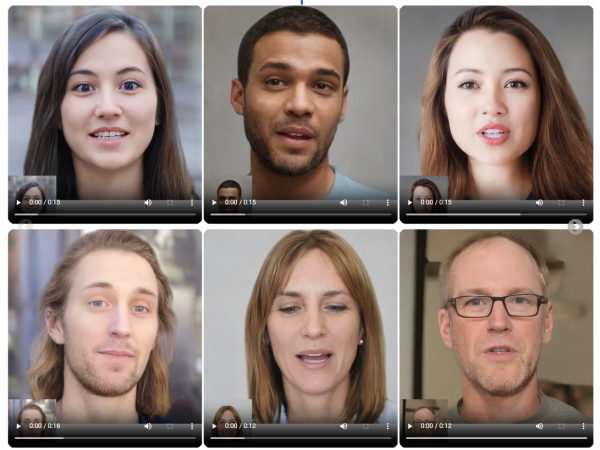

A research group at Microsoft has unveiled a new AI framework called VASA-1 that generates lifelike talking faces of striking visual quality. The framework requires only a static image and a voice audio clip as input.

Microsoft VASA-1 generates animated, realistic video portraits from an audio file

The generated videos show a new level of realism in facial and head movements. They can be generated online at a resolution of 512x512 pixels and up to 40 frames per second, with extremely low startup latency. The ability to control gaze direction, framing and even emotions is also remarkable.

The researchers emphasize that all portrait images shown are virtual and do not represent real people. They also stress that they are aware of the responsibility that comes with using AI, and they want to highlight the positive potential of their technology for education, accessibility and therapeutic support. The enormous potential for future interactive, lifelike avatars is clearly addressed as well. A demonstration shows how VASA-1 could, in theory, even be used in real-time video conferencing.

To prevent misuse, however, there are currently no plans to release demos, APIs or products until it has been ensured that the technology can be used responsibly and in compliance with regulations. In fact, in many of the examples shown, you can only tell that these are artificially generated avatars rather than real people if you look very closely.