[12:37 Sat, 11 February 2023 by Thomas Richter]

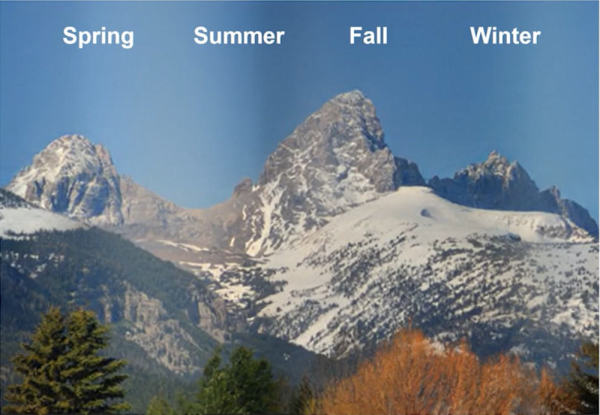

Time-lapse sequences shot over long periods can reveal changes that are too slow to see with the naked eye, such as a landscape changing over the course of the seasons, and can produce magical images. However, such recordings are rarely perfect when they are made outdoors, where a whole series of uncontrollable influences disturb the resulting time-lapse video. For example, the constantly changing lighting of a scene due to the time of day and the weather, including shifting shadows, often creates an irritating flicker. In addition, some images look very different from the preceding or following ones - for example, because clouds move through the frame or objects temporarily stand in front of the camera. Sometimes a whole series of images is missing.

All four seasons at the same time in a time-lapse clip

Nvidia's new AI algorithm now offers a solution for exactly these problems, especially for very long-running time-lapse footage spanning several years (for example, archive footage from fixed webcams showing a landscape): it not only cleans up disturbing changes in lighting caused by the weather and the time of day, but also removes other effects and smooths the whole time-lapse sequence without losing image detail.

Here is the presentation of the algorithm by Two Minute Papers:

The deep learning algorithm, based on

Program interface

The program code, including the model, is available online and can be tried out. It uses the computing power of graphics cards via CUDA, but some prior knowledge is required. However, Nvidia has often made interesting AI research projects available to the public quite quickly in order to promote its GPUs, so the time-lapse algorithm described above may soon be available as an easy-to-use free tool.
Here is the slightly longer video introducing the method: