[16:02 Tue, 23 August 2022 by Thomas Richter]

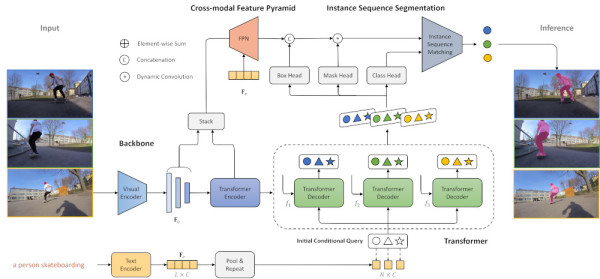

Masking moving objects in videos so that they can be edited separately from all other content used to be an extremely time-consuming job. Thanks to new deep learning algorithms, it keeps getting easier: often it is no longer even necessary to draw a mask around an object. Instead, it is enough to set a few points to select the object, which the algorithm then recognizes on its own, tracks over the following frames and masks.

(Image: A horse that jumps high)

The new deep learning algorithm ("Language as Queries for Referring Video Object Segmentation") from a team at the University of Hong Kong simplifies this work even further: here, the desired object can be selected simply by describing it in text, such as "a horse jumping high jumps". Based on this simple description, the object is detected in the video and tracked by a dynamic mask across all subsequent frames.

The algorithm's combined language and image analysis works so well that the description of the desired object can also be quite complex. For example, the algorithm also identifies an object by its dynamic relationships to other objects or its location in space, such as "the person riding a skateboard."

To do this, the AI algorithm performs a whole series of complex tasks from the areas of text and video understanding. First, it has to "understand" the input text. Then it has to correctly recognize all objects in the video, including their dynamic relationships, and identify the correct object based on the user's description (including its properties, such as color, and its relationships to other objects, such as "the tennis racket in the hand of the player with the red shirt"). Finally, the object must be separated from the background and tracked across all frames in which it appears to form a dynamic mask, even if the object changes its appearance through movement and changes in perspective.
Ideally, the mask no longer needs to be adjusted by hand afterwards. Even dynamic actions that span a video sequence, such as "the hand giving the dog a ball," are correctly detected in a longer video.

The new algorithm once again improves upon previous similar methods and demonstrates very clearly the complex tasks that can now be accomplished by combining different deep learning methods. In its current state, the method could already be used to find specific objects in a video archive, together with their relationships to other objects, and to extract them. A small further step would, for example, also enable searching by natural speech input, and a larger further step could enable editing objects in a video, including replacing them, by speech input.

German version of this page: Bewegte Objekte in Videos können dank neuer KI nur per Beschreibung maskiert werden
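
For readers who want a feel for the sequence of steps described in the article (read the text, look at the objects in each frame, pick the one that matches, follow it and mask it), here is a deliberately tiny sketch. It is not the method from the paper, which uses a learned model working on pixels. Here, simple keyword overlap stands in for language understanding, objects arrive already detected and tagged, and masks are plain rectangles. All names (`Detection`, `pick_track`, `masks_for`) and the sample data are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """Toy stand-in for one detected object in one frame (assumed input)."""
    track_id: int     # identity of the object across frames
    tags: frozenset   # words describing the object (label, color, action)
    box: tuple        # (x0, y0, x1, y1) in pixels, end-exclusive


STOPWORDS = {"a", "an", "the", "of", "in", "with"}


def query_words(text):
    """Lowercase the description and drop filler words."""
    return {w.strip('.,"') for w in text.lower().split()} - STOPWORDS


def pick_track(frames, text):
    """Return the track id whose tags overlap most with the description."""
    words = query_words(text)
    scores = {}
    for detections in frames:
        for det in detections:
            overlap = len(words & det.tags)
            scores[det.track_id] = scores.get(det.track_id, 0) + overlap
    if not scores:
        return None
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else None


def box_mask(box, width, height):
    """Rectangular boolean mask; a real system would produce pixel-exact shapes."""
    x0, y0, x1, y1 = box
    return [[x0 <= x < x1 and y0 <= y < y1 for x in range(width)]
            for y in range(height)]


def masks_for(frames, text, width, height):
    """One mask per frame; frames without the object get an empty mask."""
    track = pick_track(frames, text)
    masks = []
    for detections in frames:
        box = next((d.box for d in detections if d.track_id == track), None)
        masks.append(box_mask(box or (0, 0, 0, 0), width, height))
    return masks


# Two tiny 6x4 "frames": the horse moves, the person leaves after frame 0.
frames = [
    [Detection(1, frozenset({"horse", "jumping"}), (1, 1, 3, 3)),
     Detection(2, frozenset({"person", "standing"}), (4, 0, 6, 2))],
    [Detection(1, frozenset({"horse", "jumping"}), (2, 0, 4, 2))],
]
masks = masks_for(frames, "a horse jumping high", width=6, height=4)
```

The point of the sketch is only the order of operations: the description is turned into something comparable, every object track is scored against it, and the winning track yields a mask that moves with the object from frame to frame.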