[11:52 Tue, 5 January 2021 by Thomas Richter]

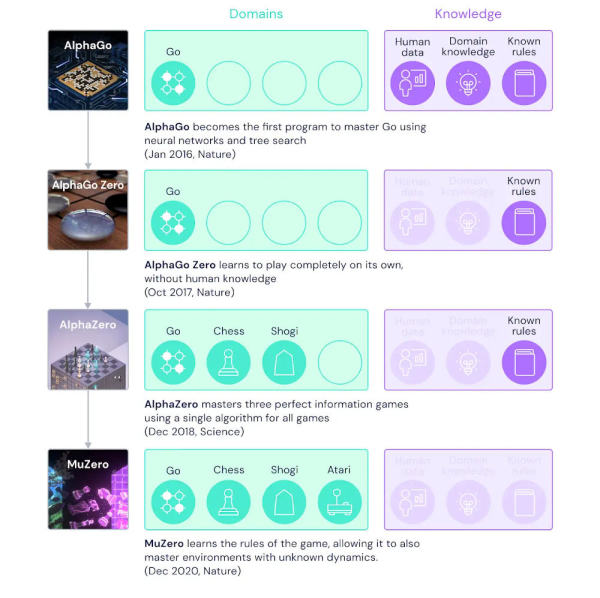

Google's DeepMind artificial intelligence division is developing MuZero, a successor to its successful AlphaGo, AlphaGo Zero and AlphaZero algorithms. Like them, it is based on deep learning, but its generality means it can easily be applied to entirely new fields.

DeepMind's MuZero compared with AlphaGo, AlphaGo Zero and AlphaZero.

MuZero has learned to play and master dozens of Atari video games (such as Asteroids, Pac-Man, Chopper Command and Defender) by pure trial and error. After 30 minutes of training it was already far superior to a human player in almost every one of the games, and also to most of the programs optimized for them. The feedback was purely visual: MuZero analyzed the pixels of the virtual screen, a highly complex information channel. In games such as chess or Go, MuZero already matches or surpasses the performance of the programs specialized for each game.

To achieve this, MuZero must first build a model of the environment of the specific task, that is, an understanding of how it works, and then solve the given task as well as possible within the limits of that model. Its design places particular emphasis on the ability to plan ahead. To this end, MuZero models mainly those aspects of its environment that matter for decision making.

As is typical for deep learning algorithms, one can only guess what kind of model it forms of the task and its environment. Over many iteration cycles such an algorithm optimizes its approach based on feedback, but what exactly it does is hidden in a black box, or more precisely in the weights of the interconnected layers of artificial neurons that turn the input (such as the pixels of a screen) into actions that end in either success or failure. Depending on the result, the algorithm then further optimizes its "solution strategy."

Video Compression by MuZero

MuZero is currently working on a topic that is extremely important for anyone working with video: video compression.
What is special here is that MuZero does not build on older algorithms and improve them bit by bit in different places, as all previous compression algorithms have done, but tackles the problem without any prior knowledge. For Google, video compression is highly relevant because YouTube delivers huge amounts of video, and any further optimization of compression means less traffic and storage, and thus lower costs. A big advantage of a new method developed by MuZero would be that it would probably not be subject to any patents, since MuZero presumably develops its own methods without historical templates.

According to Google, initial trials of video compression with MuZero are very promising and show that significant improvements can indeed be achieved. Google has not said when or how it might deploy a new method based on MuZero, only that more details will be announced in the new year. In any case, we are eager to see the results, but we expect such methods to be used in the medium rather than the short term.
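
For readers who want to see the "first learn a model, then plan within it" idea from the first part of this article in concrete form, here is a deliberately tiny Python sketch. It is not MuZero: MuZero uses neural networks and a tree search, while this toy uses a lookup table and an exhaustive lookahead. The corridor environment, the function names (`env_step`, `learn_model`, `plan`) and all parameter values are assumptions made up for illustration.

```python
import random

# Hypothetical toy task: a corridor of 5 cells with a reward for
# entering (or staying in) the last cell.
N_CELLS = 5
ACTIONS = (-1, +1)  # step left, step right


def env_step(state, action):
    """True dynamics of the toy environment; the planner never calls this."""
    next_state = min(max(state + action, 0), N_CELLS - 1)
    reward = 1.0 if next_state == N_CELLS - 1 else 0.0
    return next_state, reward


def learn_model(episodes=200, steps=10, seed=0):
    """Build a model by trial and error: record every observed transition."""
    rng = random.Random(seed)
    model = {}
    for _ in range(episodes):
        state = rng.randrange(N_CELLS)
        for _ in range(steps):
            action = rng.choice(ACTIONS)
            next_state, reward = env_step(state, action)
            model[(state, action)] = (next_state, reward)
            state = next_state
    return model


def plan(model, state, depth=6, discount=0.9):
    """Look ahead `depth` steps inside the learned model only.

    Returns (estimated value, best first action).
    """
    if depth == 0:
        return 0.0, None
    best_value, best_action = float("-inf"), None
    for action in ACTIONS:
        if (state, action) not in model:
            continue  # this move was never tried, so the model cannot predict it
        next_state, reward = model[(state, action)]
        future_value, _ = plan(model, next_state, depth - 1, discount)
        value = reward + discount * future_value
        if value > best_value:
            best_value, best_action = value, action
    if best_action is None:
        return 0.0, None
    return best_value, best_action


if __name__ == "__main__":
    model = learn_model()
    for cell in range(N_CELLS):
        value, action = plan(model, cell)
        print(f"cell {cell}: planned action {action}, estimated value {value:.2f}")
```

The point of the sketch is the division of labor: `learn_model` only observes what happens, and `plan` only consults what was learned, so the quality of the plan is bounded by the quality of the model, as described above.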