[10:59 Fri, 24 June 2022, by Thomas Richter]

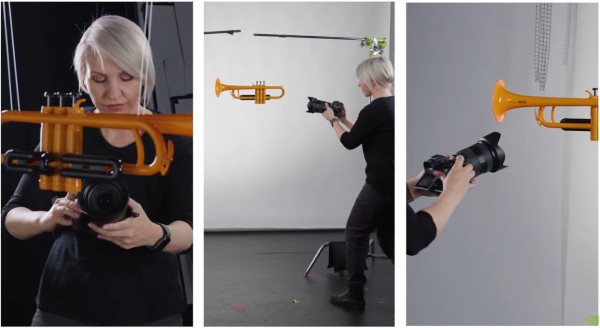

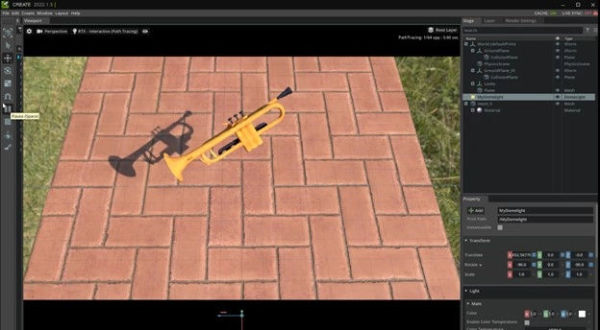

A team of researchers at Nvidia has developed a new algorithm that creates a 3D model of an object from a series of photos of it. Dubbed "Nvidia 3D MoMa," the method could allow filmmakers, architects, designers, FX specialists or even game developers to quickly import an object into a graphics engine such as Unreal, or into a 3D program, and then work with it: change its scale, swap its material, experiment with different lighting effects, or place it in a 3D scene.

How Nvidia 3D MoMa Works

Compared with other methods, the new approach is not only very fast but can also export the resulting models as triangle meshes with textured materials, a common format that existing 3D graphics engines and 3D modeling software can use directly. The reconstruction pipeline produces three components: a 3D mesh model, materials and lighting. The mesh is a kind of papier-mâché model of a 3D shape, built from triangles, which developers can modify to fit their creative vision. Materials are 2D textures laid over the 3D mesh like a skin. And because Nvidia 3D MoMa also estimates how the scene is lit, developers can later change how objects are lit. The exported model therefore consists of the triangle mesh, the parameters of the textures that make up the object, and the parameters describing the scene lighting, which makes it easier to reuse in other applications. According to Nvidia, 3D MoMa can create triangle models within an hour on a single Nvidia Tensor Core GPU.

Demo using jazz instruments

To demonstrate the capabilities of Nvidia 3D MoMa, the Nvidia team first collected about 100 images each of five jazz band instruments (trumpet, trombone, saxophone, drum set and clarinet) from different angles. The algorithm then reconstructed a 3D model of each instrument from these 2D images, represented as a triangle mesh.
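To make the three-part output (mesh, material, lighting) more concrete, here is a minimal sketch of how such an asset might be held in code. The class names (`TriangleMesh`, `Material`, `DirectionalLight`, `ReconstructedAsset`), the single-color material and the single directional light are hypothetical simplifications for illustration, not 3D MoMa's actual export format. The point it shows is that, because lighting is stored separately from geometry and material, relighting or recoloring an object only means swapping one component and shading again (here with simple Lambertian, i.e. purely diffuse, shading).

```python
import math
from dataclasses import dataclass, replace
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class TriangleMesh:
    vertices: List[Vec3]                    # 3D vertex positions
    triangles: List[Tuple[int, int, int]]   # vertex indices, counter-clockwise winding


@dataclass
class Material:
    albedo: Vec3  # hypothetical: one diffuse RGB color, each channel in [0, 1]


@dataclass
class DirectionalLight:
    direction: Vec3         # direction pointing *towards* the light
    intensity: float = 1.0


@dataclass
class ReconstructedAsset:
    # The three separately editable components: geometry, material, lighting.
    mesh: TriangleMesh
    material: Material
    light: DirectionalLight


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _normalize(v: Vec3) -> Vec3:
    length = math.sqrt(_dot(v, v))
    return (v[0] / length, v[1] / length, v[2] / length)


def face_normal(mesh: TriangleMesh, tri: Tuple[int, int, int]) -> Vec3:
    """Unit normal of one triangle, from the cross product of two of its edges."""
    a, b, c = (mesh.vertices[i] for i in tri)
    return _normalize(_cross(_sub(b, a), _sub(c, a)))


def shade(asset: ReconstructedAsset) -> List[Vec3]:
    """Lambertian (purely diffuse) RGB color of each triangle under the asset's light."""
    to_light = _normalize(asset.light.direction)
    colors = []
    for tri in asset.mesh.triangles:
        # Brightness falls off with the cosine between surface normal and light direction.
        k = asset.light.intensity * max(0.0, _dot(face_normal(asset.mesh, tri), to_light))
        colors.append((k * asset.material.albedo[0],
                       k * asset.material.albedo[1],
                       k * asset.material.albedo[2]))
    return colors


if __name__ == "__main__":
    # One triangle lying in the xy-plane, facing +z.
    mesh = TriangleMesh([(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)], [(0, 1, 2)])
    asset = ReconstructedAsset(mesh, Material((0.8, 0.6, 0.2)), DirectionalLight((0.0, 0.0, 1.0)))
    print(shade(asset))
    # Relight: only the light changes; mesh and material are untouched.
    relit = replace(asset, light=DirectionalLight((math.sqrt(3) / 2, 0.0, 0.5)))
    print(shade(relit))
    # Swap the material: same geometry and lighting, new color.
    recolored = replace(asset, material=Material((0.3, 0.3, 0.3)))
    print(shade(recolored))
```

In the usage part, tilting the light 60 degrees away from the surface normal roughly halves the triangle's brightness, while the mesh object itself is shared unchanged between the original and the relit asset.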
The Nvidia team then took the instruments out of their original scenes and imported them into the Nvidia Omniverse 3D simulation platform for editing. In any conventional graphics engine, the material of a shape generated by Nvidia 3D MoMa can easily be replaced; the team did this with the trumpet model, for example, swapping its original plastic for gold, marble, wood or cork.

For demonstration, the team then placed the instruments in a Cornell box, a classic graphics test of rendering quality. The virtual instruments responded to light just as they would in the real world, with the shiny brass instruments reflecting brightly and the matte drumheads absorbing the light. These new objects, created by inverse rendering, can serve as building blocks for a complex animated scene, shown as a virtual jazz band in the video's finale.
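Inverse rendering means running the image-formation process backwards: instead of computing an image from known shape, material and lighting, one searches for the scene parameters that reproduce the observed photos. The toy sketch below illustrates only that principle. The real system reconstructs geometry, materials and lighting together; here, geometry and lighting are assumed known (as cosines between surface normals and the light), and a single grayscale albedo is recovered by gradient descent on the squared error. The function names, the "photo" values and the learning rate are hypothetical choices for illustration.

```python
from typing import List


def render(albedo: float, cosines: List[float]) -> List[float]:
    """Forward model: diffuse brightness of each surface point = albedo * max(0, cos(theta))."""
    return [albedo * max(0.0, c) for c in cosines]


def fit_albedo(observed: List[float], cosines: List[float],
               steps: int = 200, lr: float = 0.5) -> float:
    """Inverse rendering in miniature: adjust the albedo by gradient descent until
    the rendered brightness matches the observed brightness (mean squared error)."""
    albedo = 0.0
    n = len(observed)
    for _ in range(steps):
        predicted = render(albedo, cosines)
        # d/d(albedo) of mean((albedo * c' - o)^2) = mean(2 * (albedo * c' - o) * c'),
        # where c' = max(0, c) is the clamped cosine used by render().
        grad = sum(2.0 * (p - o) * max(0.0, c)
                   for p, o, c in zip(predicted, observed, cosines)) / n
        albedo -= lr * grad
    return albedo


if __name__ == "__main__":
    # Hypothetical "photos": brightness of four surface points whose angle to the
    # light is known, rendered here from a ground-truth albedo of 0.7.
    cosines = [1.0, 0.8, 0.5, 0.2]
    photos = render(0.7, cosines)
    estimate = fit_albedo(photos, cosines)
    print(f"recovered albedo: {estimate:.4f}")
    # Once the material is isolated as its own parameter, swapping it is trivial:
    # re-render the same geometry and lighting with a hypothetical brighter material.
    print(render(0.95, cosines))
```

Because the material ends up as a separate, explicit parameter rather than being baked into the pixels, replacing it (as with the trumpet's plastic turning into gold or cork) amounts to re-rendering with a different value.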