[16:42 Mon, 9 January 2023 by Thomas Richter]

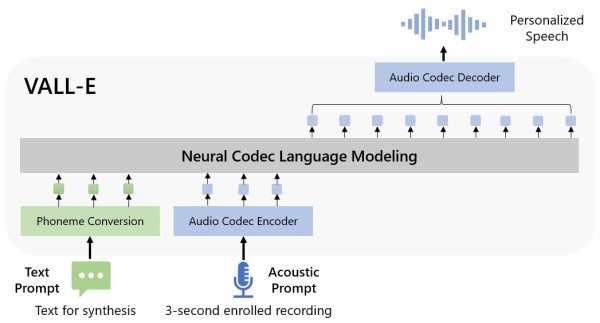

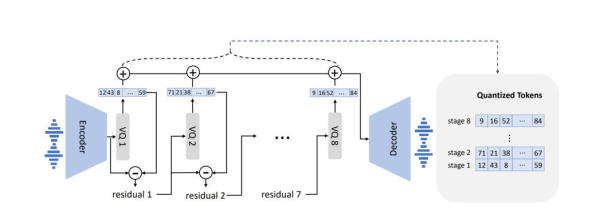

There are already […]. VALL-E can imitate a new voice from only a very short sample because of the large amount of voice recordings it has been trained on: about 60,000 hours of English speech from about 7,000 different voices. Since voices vary only within a certain spectrum, VALL-E can draw on what it has learned about similar voices (and their different characteristics) when a new voice is to be simulated, and synthesize the new voice that way. Interestingly, VALL-E uses a neural audio codec.

According to the researchers, the experimental results show that VALL-E significantly outperforms comparable TTS (text-to-speech) systems in terms of speech naturalness and speaker similarity. In addition, VALL-E can largely preserve the speaker's emotion and the acoustic environment of the acoustic prompt in the synthesized speech. VALL-E's output can also vary for the same input text, so it can synthesize a variety of slightly different personalized speech samples.
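The article does not say why the output can differ for identical input text. One common reason in generative models is that each output token is sampled from a probability distribution rather than always taking the most likely one. The following is a minimal toy sketch of that idea only; the vocabulary, the probability table and the function names are invented for illustration and are not VALL-E's actual model or code.

```python
import random

# Hypothetical toy vocabulary of discrete audio tokens (not a real codebook).
VOCAB = [0, 1, 2, 3]

def next_token_probs(prev_token):
    """Toy stand-in for a trained model: fixed probabilities given the previous token."""
    # Invented values for illustration; each row sums to 1.
    table = {
        None: [0.4, 0.3, 0.2, 0.1],
        0: [0.1, 0.5, 0.3, 0.1],
        1: [0.2, 0.1, 0.5, 0.2],
        2: [0.3, 0.2, 0.1, 0.4],
        3: [0.4, 0.3, 0.2, 0.1],
    }
    return table[prev_token]

def decode(length, rng=None):
    """Greedy decoding if rng is None; otherwise sample each token from the distribution."""
    tokens, prev = [], None
    for _ in range(length):
        probs = next_token_probs(prev)
        if rng is None:
            # Greedy: always take the most likely token, so the result never changes.
            prev = VOCAB[probs.index(max(probs))]
        else:
            # Sampling: draw a token at random, weighted by its probability.
            prev = rng.choices(VOCAB, weights=probs, k=1)[0]
        tokens.append(prev)
    return tokens

if __name__ == "__main__":
    print("greedy :", decode(8))
    for seed in range(3):
        print("sampled:", decode(8, random.Random(seed)))
```

In this sketch, greedy decoding returns the same token sequence on every call, while sampling with different random seeds generally yields different sequences for the same starting condition, which is the kind of variation the article describes.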

There are many more examples at […].

Many possible applications for voice synthesis

The opportunities of the new technology are as enormous as the risks: because VALL-E requires only very short voice samples, the field of application for voice synthesis expands significantly once again. When dubbing movies, for example, it is already possible to use speech synthesis to have the original actor's own voice speak the text in another language. Personal assistants such as Siri or Alexa could also communicate with the user in any other person's voice, or text messages (whether SMS or WhatsApp) could be read out in the voice of the respective sender. A very practical use is for people who have lost their voice due to a disease (such as ALS). They could then talk to others in their own voice by typing text, provided of course that old recordings of their voice exist.

The danger of manipulation using fake voices

The potential for misuse of voice simulation by VALL-E using very short samples is of course also great: voice recordings could be faked at will in order to discredit someone, be it a well-known politician or a private person, or to put false information into circulation. Likewise, automated advertising calls could be made using the voice of one's own mother or friend, or an even more convincing version of the infamous […].