[23:10 Mon, 6 February 2023 by blip]

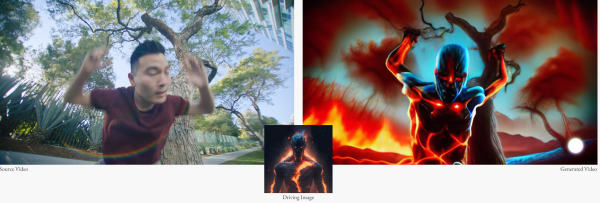

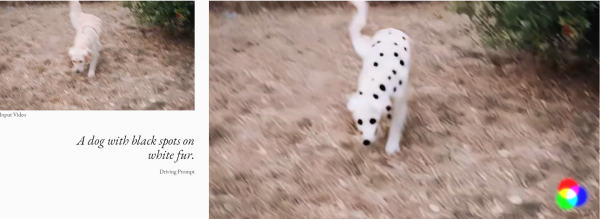

And bang! Here it is: the first market-ready video AI, which has been in the air for a while. Runway Research, one of the companies behind the image AI Stable Diffusion, has just unveiled Gen-1, an AI system for video generation. As teased in September, Gen-1 can manipulate the style, composition or content of a video, starting from a text prompt or a sample image. The approach is called video-to-video, since a source video is required. In the first demo videos, the results look impressively coherent.

There are five modes to choose from at launch. A stylization mode changes the style or look of a clip, while a storyboard mode turns a simple moving sketch into a finished animation. A third mode offers mask functionality: objects can be selected and then modified by text, for example.

[Image: Stylization]

The fourth mode takes crude 3D models and adds textures to create rendered objects. Finally, a customization mode is meant to let users adapt Gen-1 further to their own needs.

[Image: Mask]

[Image: Render]

Even if the videos don't look photorealistic (yet), Gen-1 promises to make many tasks, in video VFX and beyond, easier through text-driven and object-based editing. Runway describes it as filming without filming: "No lights. No cameras. All action." However, Gen-1 is not fully public yet; for now, you can only apply for early access (for research purposes).