[19:58 Mon, 20 March 2023 by Thomas Richter]

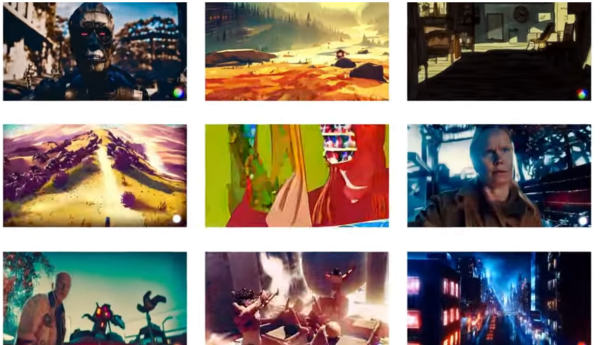

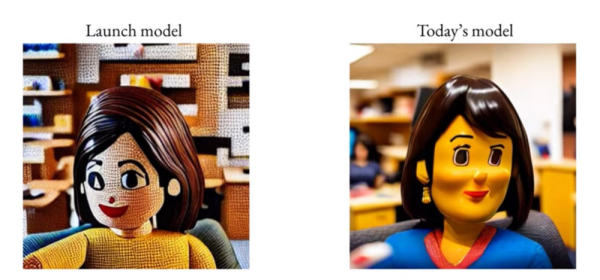

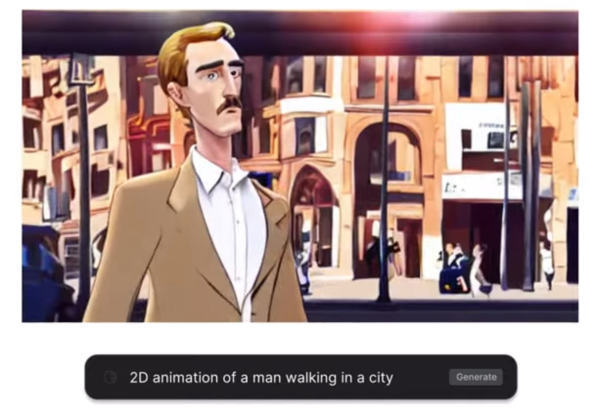

Runway Research, one of the companies behind the image AI Stable Diffusion, has just announced the new video AI Runway Gen 2, whose capabilities significantly exceed those of the first version.

Runway Gen 2 sample clips. Prompt: "Aerial drone footage of a mountain range"

Runway Gen 2 builds on the features of Gen 1: in addition to generating video from a text prompt, it also offers Gen 1's features, such as the ability to manipulate the style, composition, or even content of a video based on a text prompt or sample image (similar to the recently shown but less sophisticated […]).

In addition, a special stylization mode can change the style or look of a clip via a text description, while the storyboard mode converts a simple moving sketch into a finished animation. A sixth mode provides masking functionality: objects can be selected by text, for example, and modified selectively. In the seventh mode, rough 3D models can be given suitable textures via text to create rendered objects. And finally, the customization mode makes it possible to adapt Gen 2 specifically to one's own needs.

Prompt: "The late afternoon sun peeking through the window of a New York City loft."

The Runway Gen 2 video also demonstrates how steadily Gen 1 image quality has improved since launch. And indeed, the videos generated with Gen 2 are of very high quality: the otherwise notorious inconsistency of subjects from frame to frame has been reduced, and composition and general rendering have improved, now showing very few image errors.

Improvements in Runway Gen 1 quality over the course of a few weeks.

Compared to […]