[13:41 Mon, 20 March 2023 by Thomas Richter]

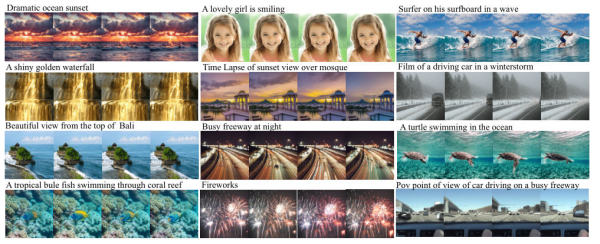

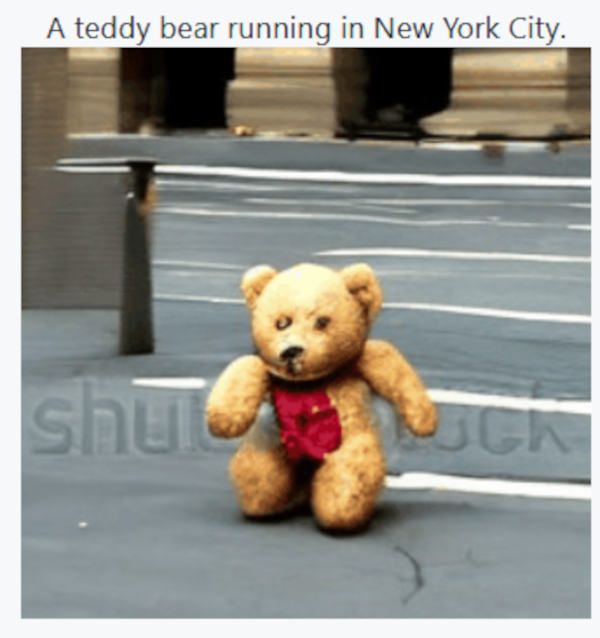

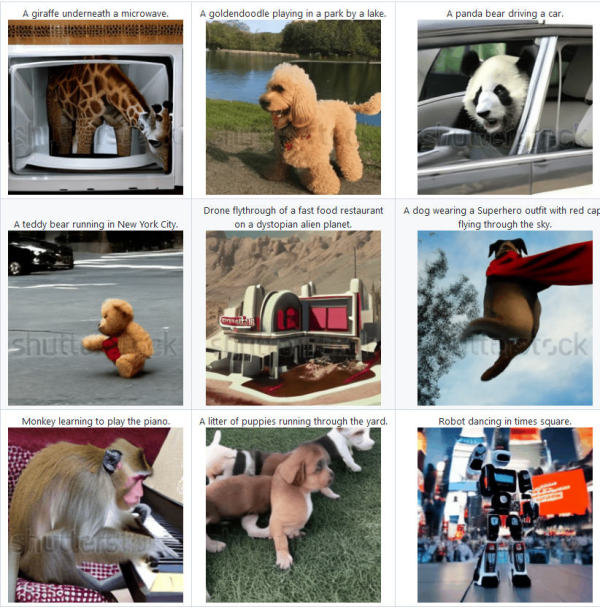

A Chinese research team has published a new text-to-video AI with 1.7 billion parameters, i.e. it generates video from text input alone. Similar algorithms have already been presented by Meta.

Generating videos on your own PC

Those who have some experience in configuring AI algorithms and their models can try VideoFusion on their own PC - however, a high-performance GPU with at least 16 GB of VRAM (or 8 GB at half precision) as well as 16 GB of RAM is still a prerequisite. On an Nvidia RTX 3090 graphics card, generating a xxx second clip takes about 23 s. Here is a [...]. Alternatively, there is already a [...].

Monkey learning to play the piano:

Limitations

The researchers themselves note that VideoFusion cannot produce perfect film and TV quality, nor can it render text in the video. Only English is currently supported as an input language. The image quality and resolution (128x128) are also obviously still relatively low and reminiscent of the early days of AI image generation - but experience in the field of AI shows how fast progress is there, especially if the associated program and model - as was already the case with [...]

Robot dancing in Times Square:

At the moment VideoFusion also uses only 1.7 billion parameters - for comparison: the first DALL-E, which can only generate images, has about 12 billion parameters, so there is still a lot of room for further quality improvements just by using more parameters.

Copyright by Shutterstock?

It is noticeable that the Shutterstock logo appears in a large part of the generated videos. It will be interesting to see how Shutterstock reacts to such videos, which are potentially legally contestable - on the one hand for unauthorized use of Shutterstock images as training material and on the other hand for unauthorized and potentially business-damaging use of the logo.
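As a rough illustration of why half precision lowers the VRAM requirement mentioned in the hardware section: the following sketch estimates the memory taken by the model weights alone for 1.7 billion parameters. The function name `weight_memory_gib` and the bytes-per-parameter table are assumptions made for this example, not part of VideoFusion. Actual VRAM use is higher, since activations and intermediate buffers also need space.

```python
# Back-of-the-envelope estimate: memory needed for the weights only.
# Assumption: 4 bytes per parameter in full precision (float32),
# 2 bytes per parameter in half precision (float16).
BYTES_PER_PARAM = {"float32": 4, "float16": 2}


def weight_memory_gib(num_params: int, dtype: str) -> float:
    """Return the size of num_params weights in GiB for the given dtype."""
    return num_params * BYTES_PER_PARAM[dtype] / 1024**3


if __name__ == "__main__":
    params = 1_700_000_000  # parameter count stated in the article
    for dtype in BYTES_PER_PARAM:
        print(f"{dtype}: {weight_memory_gib(params, dtype):.2f} GiB")
```

Halving the bytes per parameter halves the weight footprint, which is in line with the stated drop from 16 GB to 8 GB of VRAM.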